Authors: Rajesh Thota & Hukum Chandu Rokkala

The modernization imperative

If you've spent any time in a data engineering or analytics leadership role over the past couple of years, you already know: the ground is shifting—fast. Legacy platforms that once served us well are now increasingly expensive to maintain, difficult to scale, and stubbornly resistant to the kind of agility today's business demands. Meanwhile, Microsoft Fabric has emerged as a compelling, unified analytics platform that consolidates data engineering, warehousing, real-time analytics, data science, and business intelligence under a single roof.

The promise is clear. But here's the catch—migration is rarely simple. Whether you're running ETL on Informatica, orchestrating workflows in Alteryx, managing SSIS packages, or visualizing insights in Tableau, shifting those workloads to a new platform involves more than just a lift-and-shift. It demands deep understanding of source logic, careful mapping to target services, thorough validation, and often, a willingness to rethink long-standing patterns. That's a lot of manual effort, billable hours, and project risk.

This is exactly the problem we set out to solve with IntelliConvert.

What is IntelliConvert?

IntelliConvert is Sonata Software's homegrown migration accelerator: a suite of purpose-built converters designed to automate and de-risk the journey to Microsoft Fabric and the broader Azure data ecosystem. Rather than approaching each migration as a greenfield consulting engagement, IntelliConvert brings battle-tested automation to the table, dramatically reducing manual effort, compressing timelines, and delivering measurable cost savings from day one.

Think of it as the difference between hand-drafting every blueprint from scratch and using a precision engineering tool that understands the source, maps to the target, generates production-ready output, and validates the result—all while your team stays focused on what matters most: business outcomes.

The IntelliConvert suite covers a wide spectrum of migration and modernization scenarios.

Let's walk through each of these converters to understand what they do, how they work, and—most importantly—what kind of results real enterprises are seeing.

1. Alteryx → Microsoft Fabric

Alteryx has long been a favorite for data analysts who need powerful, desktop-based workflow authoring without writing code. But as organizations scale, the limitations become hard to ignore: per-seat licensing costs pile up, governance gets tricky, and desktop workflows don't lend themselves to cloud-native orchestration.

IntelliConvert's Alteryx-to-Fabric converter automates the discovery of existing Alteryx workflows—extracting logic, transformations, and dependencies—and intelligently maps them to Fabric-native services. The output? Production-ready PySpark notebooks and low-code Fabric assets that preserve the original business logic while unlocking the scalability and cost efficiency of Fabric.

Built-in validation ensures that converted pipelines produce results consistent with the original workflows, giving teams confidence that nothing falls through the cracks.

- Impact: 30–40% reduction in analytics costs | 70% less migration effort | $300K+ annual savings | 10–20× faster migration
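To make the validation idea concrete, here is a minimal sketch of an order-insensitive output comparison between an original workflow and its converted pipeline. It is an illustration only, not IntelliConvert's actual validation logic; the function names `row_fingerprint` and `compare_outputs` are hypothetical, and the sketch assumes both outputs fit in memory as lists of tuples.

```python
import hashlib
from collections import Counter


def row_fingerprint(row):
    """Hash one row into a stable fingerprint (type-sensitive: 1 and 1.0 differ)."""
    canonical = "\x1f".join(repr(value) for value in row)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def compare_outputs(source_rows, target_rows):
    """Compare two result sets while ignoring row order but respecting duplicates."""
    src = Counter(row_fingerprint(r) for r in source_rows)
    tgt = Counter(row_fingerprint(r) for r in target_rows)
    return {
        "source_count": sum(src.values()),
        "target_count": sum(tgt.values()),
        # Rows the original produced that the converted pipeline did not.
        "missing_in_target": sum((src - tgt).values()),
        # Rows the converted pipeline produced that the original did not.
        "unexpected_in_target": sum((tgt - src).values()),
    }


# Hypothetical sample outputs: same rows, different order.
original = [(1, "a"), (2, "b"), (2, "b")]
converted = [(2, "b"), (1, "a"), (2, "b")]
report = compare_outputs(original, converted)
```

A report with zero missing and zero unexpected rows suggests the two outputs match. At real data volumes the same comparison would more likely run as aggregate checksums inside the engine than as a Python loop.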

2. SQL Server → Microsoft Fabric

SQL Server remains the backbone of countless enterprise data platforms. But migrating hundreds of stored procedures, views, and complex T-SQL to a modern lakehouse or warehouse environment is notoriously time-consuming—and error-prone when done manually.

Our SQL Server-to-Fabric converter uses AI-powered, AST-based parsing to understand complex T-SQL at a structural level rather than through pattern matching alone. It converts stored procedures, functions, and views into production-ready Fabric Lakehouse (PySpark) or Fabric Warehouse assets. Performance anti-patterns like cursor-based logic are automatically refactored to set-based operations. And because data privacy matters, validation runs locally via on-premises LLM checks, so no sensitive code ever leaves your environment.

- Impact: 95–98% PySpark automation accuracy | 85–90% Warehouse automation
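For readers less familiar with the cursor anti-pattern mentioned above, the sketch below contrasts row-by-row processing with its set-based equivalent. It uses Python's built-in `sqlite3` module purely so the example runs anywhere; the `orders` table, its columns, and the discount rule are made-up assumptions, and this does not represent the converter's generated output.

```python
import sqlite3


def make_db():
    """Create an in-memory table with a few hypothetical orders (amounts in cents)."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE orders (id INTEGER PRIMARY KEY, amount_cents INTEGER, discount_cents INTEGER)"
    )
    conn.executemany(
        "INSERT INTO orders (id, amount_cents, discount_cents) VALUES (?, ?, 0)",
        [(1, 5000), (2, 15000), (3, 50000)],
    )
    return conn


def cursor_style(conn):
    """Cursor-like pattern: read every row, then issue one UPDATE per qualifying row."""
    rows = conn.execute("SELECT id, amount_cents FROM orders").fetchall()
    for order_id, amount_cents in rows:
        if amount_cents > 10000:
            conn.execute(
                "UPDATE orders SET discount_cents = ? WHERE id = ?",
                (amount_cents // 10, order_id),
            )


def set_based(conn):
    """Set-based pattern: one statement expresses the same rule for all rows."""
    conn.execute(
        "UPDATE orders SET discount_cents = amount_cents / 10 WHERE amount_cents > 10000"
    )


def snapshot(conn):
    return conn.execute(
        "SELECT id, amount_cents, discount_cents FROM orders ORDER BY id"
    ).fetchall()


legacy = make_db()
cursor_style(legacy)
modern = make_db()
set_based(modern)
```

Both versions leave the table in the same state, but the set-based form hands the whole operation to the engine in a single statement, which is what distributed engines such as Spark are built to optimize.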

3. Informatica → Microsoft Fabric

Informatica PowerCenter has been the ETL workhorse for many large enterprises. But with Microsoft Fabric offering Dataflow Gen2 and unified pipeline capabilities, the incentive to consolidate is strong, especially when licensing renewal conversations come around.

IntelliConvert's Informatica-to-Fabric converter takes PowerCenter mappings and automatically converts them into production-ready Fabric Dataflow Gen2 items. It supports 16 core transformations out of the box, uses AI-driven analysis to handle complex mapping logic, and enforces enterprise-grade security throughout the process.

The result: what used to take months of painstaking manual mapping now happens in a fraction of the time—with fewer errors and significantly lower risk.

- Impact: 10× faster migration | 70–90% cost savings | Reduced migration risk

4. SSIS → Spark (Fabric / Databricks)

SSIS packages have been the go-to ETL mechanism for Microsoft shops for over a decade. But those .dtsx packages were built for a world of on-premises SQL Server instances and always-on infrastructure. Moving to a pay-for-use, cloud-native model means rethinking how those packages run.

IntelliConvert parses SSIS packages—control flow, data flow, connection managers, and task dependencies—and converts them into Spark-based patterns compatible with Microsoft Fabric or Databricks. Connection managers are migrated to Spark-compatible data sources, and the resulting notebooks follow cloud-native best practices.

- Impact: Lower infrastructure costs | 50–60% faster migration timelines | Pay-for-use economics replace always-on server costs

5. NiFi → Azure Data Factory / Databricks Jobs

Apache NiFi is widely used for data ingestion and flow-based programming, particularly in Hadoop-centric environments. But as organizations move to Azure, those NiFi processor graphs need a new home.

Our NiFi converter translates processor graphs into ADF activity chains or Databricks Jobs, mapping NiFi processors to their Azure-native equivalents—routing, file movement, REST calls, and more. Legacy HDFS paths are automatically rewritten to ADLS or DBFS, and an AI-assisted validation step generates a comprehensive migration dossier for audit and compliance purposes.

- Impact: ~70% automated conversion | 50–60% faster timelines | Lower rework through deterministic processor-to-activity mappings
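The HDFS path rewriting described above can be pictured with a small sketch. This is an assumption-laden illustration rather than the converter's own code: the function names, the container `raw`, the storage account `examplelake`, and the `/mnt/legacy` mount root are all hypothetical. The `abfss://<container>@<account>.dfs.core.windows.net/<path>` form is the standard ADLS Gen2 URI layout.

```python
from urllib.parse import urlparse


def hdfs_to_abfss(path, container, account):
    """Rewrite an hdfs:// URI to an ADLS Gen2 abfss:// URI, keeping the file path."""
    parsed = urlparse(path)
    if parsed.scheme != "hdfs":
        return path  # leave non-HDFS paths untouched
    return f"abfss://{container}@{account}.dfs.core.windows.net{parsed.path}"


def hdfs_to_dbfs(path, mount_root="/mnt/legacy"):
    """Rewrite an hdfs:// URI to a dbfs:/ path under a (hypothetical) mount root."""
    parsed = urlparse(path)
    if parsed.scheme != "hdfs":
        return path
    return f"dbfs:{mount_root}{parsed.path}"


legacy_path = "hdfs://namenode:8020/data/raw/events"
adls_path = hdfs_to_abfss(legacy_path, container="raw", account="examplelake")
dbfs_path = hdfs_to_dbfs(legacy_path)
```

In practice the mapping from HDFS directories to containers and accounts is a per-project decision, so a real rewrite would be driven by a lookup table rather than a single hard-coded target.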

6. PySpark modernization (Hadoop → Databricks / Fabric)

Many organizations running Cloudera or other Hadoop distributions have accumulated years' worth of PySpark scripts—often written against older APIs, referencing HDFS paths directly, and carrying forward patterns that don't translate well to Databricks or Fabric.

IntelliConvert's PySpark modernizer uses AST-based parsing to upgrade scripts at a structural level: normalizing SparkSession usage, modernizing storage paths from HDFS to DBFS or ADLS, upgrading RDD-based code to DataFrame APIs, and running streaming and performance checks. An optional LLM-powered code polish step cleans up readability and adds inline documentation.

- Impact: ~80% automated conversion | 40–50% faster cloud readiness | Lower performance tuning effort post-migration
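As a rough illustration of what AST-based analysis means here, the sketch below uses Python's standard `ast` module to flag three legacy patterns in a PySpark script: `SQLContext`/`HiveContext` construction, `.rdd` access, and hard-coded `hdfs://` literals. The class name `LegacySparkFinder`, the rule set, and the sample script are assumptions made for this example; the actual modernizer's rules are not described in this post.

```python
import ast

# Entry points that newer Spark code replaces with SparkSession.
LEGACY_NAMES = {"SQLContext", "HiveContext"}


class LegacySparkFinder(ast.NodeVisitor):
    """Walk the syntax tree and record (line, message) for each legacy pattern."""

    def __init__(self):
        self.findings = []

    def visit_Call(self, node):
        func = node.func
        name = func.id if isinstance(func, ast.Name) else getattr(func, "attr", None)
        if name in LEGACY_NAMES:
            self.findings.append((node.lineno, f"{name} -> SparkSession"))
        self.generic_visit(node)

    def visit_Attribute(self, node):
        if node.attr == "rdd":
            self.findings.append((node.lineno, ".rdd access -> DataFrame API"))
        self.generic_visit(node)

    def visit_Constant(self, node):
        if isinstance(node.value, str) and node.value.startswith("hdfs://"):
            self.findings.append((node.lineno, "hdfs:// path literal"))


def scan(source):
    """Parse the script text (without executing it) and return sorted findings."""
    finder = LegacySparkFinder()
    finder.visit(ast.parse(source))
    return sorted(finder.findings)


# Hypothetical legacy script; it is only parsed, never run, so PySpark is not required.
SAMPLE = '''\
sqlContext = SQLContext(sc)
df = sqlContext.read.parquet("hdfs://namenode:8020/data/events")
counts = df.rdd.map(lambda r: (r[0], 1)).reduceByKey(lambda a, b: a + b)
'''

findings = scan(SAMPLE)
```

Because the script is analyzed as a tree rather than as text, a comment or variable name that merely contains the word `rdd` does not trigger a finding, which is the practical advantage over regex-style matching.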

7. Scala Spark modernization (Hadoop → Databricks / Fabric)

Scala Spark workloads present their own set of modernization challenges—API compatibility, runtime differences, and governance requirements like Unity Catalog integration. IntelliConvert addresses these with AST-based refactoring that updates APIs, modernizes storage references, detects anti-patterns, and aligns output with Databricks or Fabric best practices.

AI-powered validation rounds out the process, catching edge cases and ensuring production readiness.

- Impact: ~75% automated conversion (batch) | 30–40% reduced refactoring effort | Fewer production incidents post-migration

8. Hive SQL → Databricks / Fabric SQL (Delta)

Hive has been the SQL layer of choice for many Hadoop environments. But Delta Lake offers significant advantages in performance, ACID compliance, and governance. The challenge? Hive DDL/DML, custom functions, storage formats, and path references all need to be translated.

IntelliConvert handles this end-to-end: converting Hive SQL to Delta-compatible syntax, normalizing functions, modernizing table and storage definitions, and providing AI-validated guidance on optimization opportunities specific to Delta.

- Impact: ~85% automated conversion | 40–60% faster timelines | Cost savings from Delta-specific optimizations
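To give a feel for the kind of DDL translation involved, here is a deliberately simplified sketch that rewrites a Hive `CREATE TABLE` statement into a Delta-style one by dropping the `ROW FORMAT DELIMITED` clause and replacing `STORED AS <format>` with `USING DELTA`. It is regex-based and covers only these two clauses; the function name `hive_ddl_to_delta` and the sample table are hypothetical. A real converter would need a proper SQL parser, and the sketch touches the DDL text only, not the underlying data files.

```python
import re

# Hive storage clause, e.g. "STORED AS PARQUET".
_STORED_AS = re.compile(
    r"\bSTORED\s+AS\s+(?:PARQUET|ORC|AVRO|TEXTFILE|SEQUENCEFILE|RCFILE)\b",
    re.IGNORECASE,
)
# Text-format clause that has no meaning for a Delta table.
_ROW_FORMAT = re.compile(
    r"\bROW\s+FORMAT\s+DELIMITED(?:\s+FIELDS\s+TERMINATED\s+BY\s+'[^']*')?",
    re.IGNORECASE,
)


def hive_ddl_to_delta(ddl):
    """Return the DDL with Hive storage clauses swapped for USING DELTA."""
    out = _ROW_FORMAT.sub("", ddl)
    out = _STORED_AS.sub("USING DELTA", out)
    # Collapse the whitespace left behind by removed clauses.
    return re.sub(r"\s+", " ", out).strip()


hive_ddl = """
CREATE TABLE sales (id BIGINT, amount DOUBLE)
ROW FORMAT DELIMITED FIELDS TERMINATED BY ','
STORED AS TEXTFILE
"""
delta_ddl = hive_ddl_to_delta(hive_ddl)
```

Partitioned tables, SerDe properties, and custom functions each need their own handling, which is where most of the remaining manual effort in a Hive migration tends to sit.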

9. Tableau → Power BI

For organizations standardizing on the Microsoft analytics stack, migrating Tableau workbooks to Power BI is a common—and often painful—exercise. Manual recreation of dashboards, calculated fields, and data source connections is tedious and error-prone.

IntelliConvert automates this migration with high fidelity, preserving workbook structure, visual layout, and analytical intent. Data source connections are translated to Power BI equivalents, and the output is ready for review and deployment without starting from scratch.

- Impact: 60–70% reduction in migration effort | Significant licensing savings through platform consolidation

10. SSRS → Power BI (paginated and interactive)

SSRS reports—especially paginated, parameter-heavy operational reports—represent a significant body of work in many enterprises. Simply abandoning them isn't practical; rebuilding them manually is expensive.

IntelliConvert modernizes SSRS by converting reports to Power BI Paginated Reports (preserving layouts, parameters, and datasets) while also enabling conversion to interactive Power BI reports where it makes sense. The converter handles backend data source translation, ensuring that the migrated reports connect seamlessly to their data.

- Impact: Faster report modernization | Reduced manual rebuild effort | Higher analytics adoption through modern UX

11. Standalone Power BI → Microsoft Fabric

Already on Power BI but not yet on Fabric? The journey to Fabric isn't just about flipping a switch. Workspaces, datasets, semantic models, dataflows, and reports all need to be assessed, migrated, and validated in the context of Fabric's unified governance and compute model.

IntelliConvert automates the migration of workspaces, datasets, and reports to Fabric, translating standalone dataflows into Fabric-native pipelines. The result is a unified analytics environment with stronger governance, lower operational overhead, and a faster path to Fabric's advanced capabilities—all without disrupting ongoing operations.

- Impact: Accelerated Fabric adoption | Operational continuity during migration | Unified governance from day one

Why IntelliConvert? The bigger picture

Each converter in the IntelliConvert suite addresses a specific migration scenario. But the real power lies in the platform-level thinking behind it:

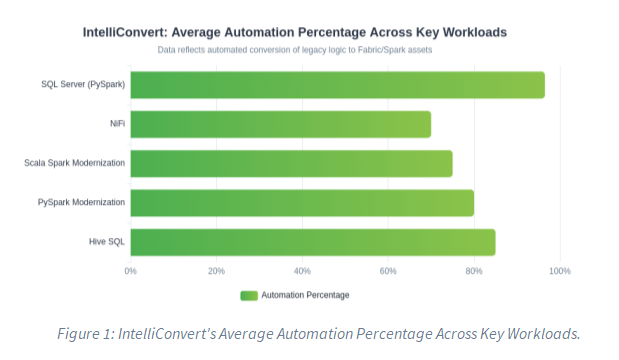

- Automation over manual effort: Every converter is designed to maximize automated conversion rates—typically 70–95%—so your team spends time on edge cases and business logic, not rote translation.

- Speed without shortcuts: Compressed timelines don't come at the cost of quality. AST-based parsing, AI-powered analysis, and automated validation ensure that speed and accuracy go hand in hand.

- Security by design: On-premises LLM validation, enterprise-grade security, and zero data exfiltration ensure that sensitive code and data stay where they belong.

- Breadth of coverage: From ETL engines to BI tools, from Hadoop to Fabric, IntelliConvert covers the full spectrum of a typical enterprise data estate modernization.

Ready to start?

Migration doesn't have to be a multi-year, budget-busting exercise. With IntelliConvert, we've seen enterprises go from initial assessment to production-ready Fabric workloads in weeks, not months—with measurable cost savings from the very first sprint.

If you're evaluating Microsoft Fabric, or if you're already on the journey and looking for ways to accelerate, we'd love to show you what IntelliConvert can do. Let's have a conversation.

Get in touch with the Sonata Software team to schedule a demo or assessment.

Disclaimer: The metrics and savings figures referenced in this blog are based on assessments conducted across real-world migration engagements. Actual results may vary depending on the complexity of the source environment, data volumes, and organizational readiness.